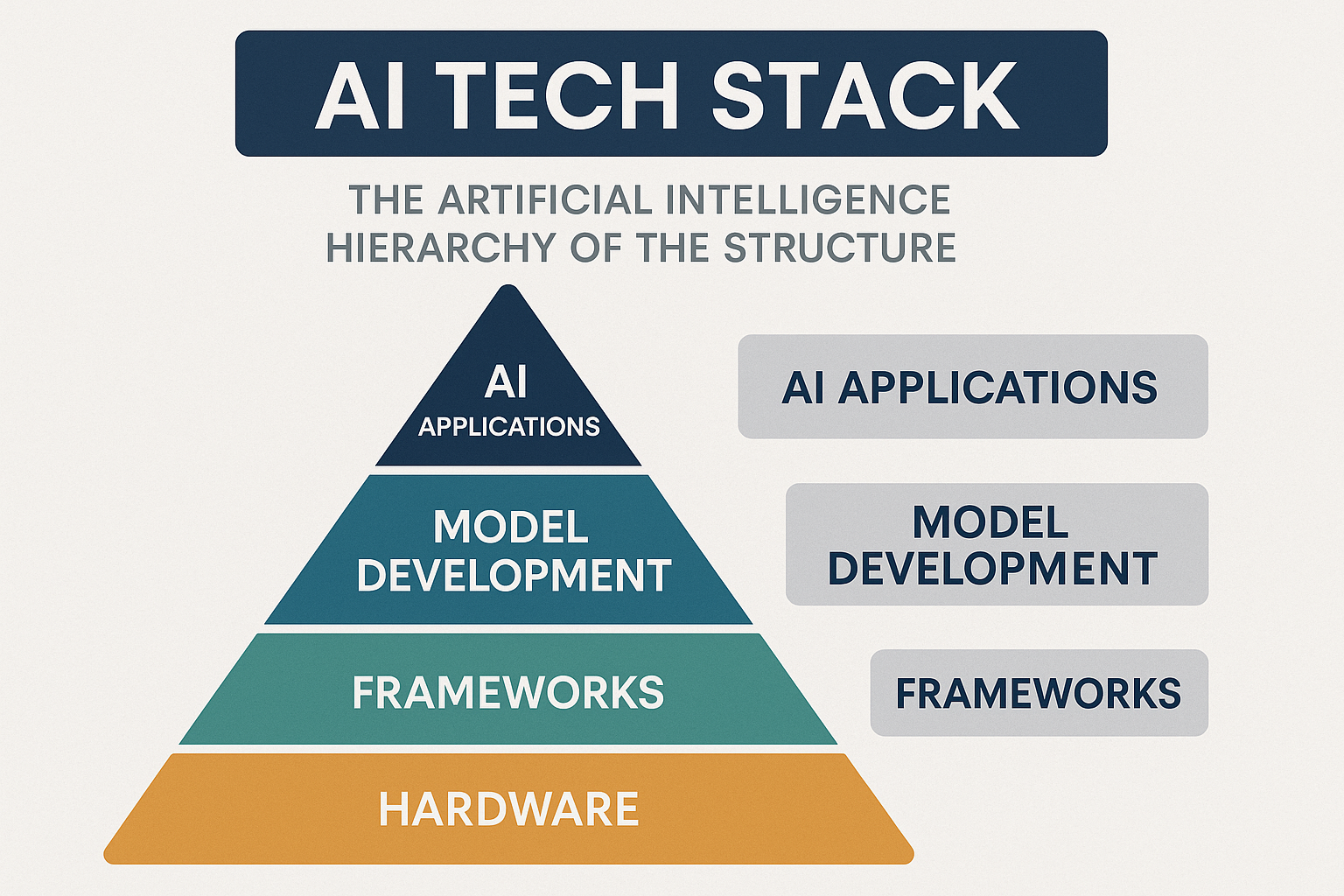

AI Tech Stack

The “AI tech stack” is a conceptual framework that describes the layers of technology needed to build and deploy an AI system, from the underlying hardware to the end-user application. “Artificial Intelligence Hierarchy of the Structure” is not a standard industry term, but it can be read as a synonym for the AI tech stack: a tiered system of components working together. The stack can be broken down into six main layers, each building on the ones beneath it. Layering a system this way helps it stay scalable and efficient while delivering complex AI capabilities.


Computing Hardware

At the very bottom of the AI stack is the computing hardware, the muscle that does all the heavy lifting. While central processing units (CPUs) handle general tasks, AI needs specialised processors that can perform thousands of calculations at once.

The most famous of these are Graphics Processing Units (GPUs), like those made by NVIDIA. Originally designed for rendering video games, GPUs are very good at parallel processing, which means they can perform many computations simultaneously. This makes them well suited to training AI models. There are also Application-Specific Integrated Circuits (ASICs), chips designed for a single purpose that trade flexibility for maximum efficiency on a specific AI task. Google’s Tensor Processing Units (TPUs), which are optimised for machine learning workloads, are a well-known example. Finally, Neural Processing Units (NPUs) are specialised processors designed for AI tasks, often integrated into consumer devices like smartphones to enable on-device AI functions with low power consumption.

For example, a company training a massive AI model like GPT-4 doesn’t use a single computer. It uses a cluster of thousands of powerful GPUs working in tandem for weeks or even months. This is a monumental effort, and it’s why these chips are in such high demand.
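To make the idea of parallel processing a little more concrete, here is a minimal Python sketch. It is an illustration only: the function names are made up for this post, and Python threads on a CPU will not deliver GPU-style speed-ups. What it shows is the structure of the work. Each row of a matrix-vector product can be computed independently of the others, and that independence is what lets a GPU spread the job across many cores at once.

```python
from concurrent.futures import ThreadPoolExecutor


def dot(row, vec):
    """Dot product of one matrix row with the vector."""
    return sum(a * b for a, b in zip(row, vec))


def matvec_sequential(matrix, vec):
    """Compute each output value one after another, as a single core would."""
    return [dot(row, vec) for row in matrix]


def matvec_parallel(matrix, vec, workers=4):
    """Hand each row to a worker; no row depends on any other row's result.

    Illustrative only: real GPU code would launch thousands of such
    independent computations at the same time.
    """
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return list(pool.map(lambda row: dot(row, vec), matrix))


matrix = [[1, 2], [3, 4], [5, 6]]
vector = [10, 1]
print(matvec_sequential(matrix, vector))
print(matvec_parallel(matrix, vector))
```

Both functions return the same values; only the scheduling of the work differs.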

As NVIDIA’s CEO Jensen Huang put it, “The more powerful the GPU, the faster the progress of AI. And the more powerful the AI, the faster the progress of GPUs.”


Cloud Platforms

Training and running AI models requires a tremendous amount of computational power and data storage. This is where cloud platforms come in. Companies like Amazon Web Services (AWS), Google Cloud Platform (GCP), and Microsoft Azure provide the infrastructure needed to build and deploy AI at scale.

These platforms offer a pay-as-you-go model, giving businesses access to powerful GPUs and TPUs without having to buy and maintain their own expensive data centres. Beyond just raw computing power, cloud providers offer a full suite of managed services. This includes tools for setting up and managing data pipelines, training models, and deploying them to production. For instance, Google’s Vertex AI and Microsoft’s Azure AI provide a complete ecosystem, with everything from data management tools to pre-built models and deployment pipelines, making it much easier for companies to get started with AI. The cloud has democratised access to AI, allowing smaller businesses and researchers to compete with tech giants.

Foundational Models

The heart of many modern AI systems lies in foundational models, more often called foundation models. These are vast, general-purpose models trained on huge amounts of data that can be adapted for a wide variety of tasks. You can think of them as the brain of an AI application.

The most well-known are Large Language Models (LLMs) like OpenAI’s GPT series, Google’s Gemini, or Anthropic’s Claude, which are trained on text data and can understand, generate, and summarise human language. But foundational models aren’t just for text. Other examples include models that can generate images (like Stability AI’s Stable Diffusion), understand and process audio (like OpenAI’s Whisper), or even control robots. The power of foundational models comes from a technique called transfer learning, where a model learns general knowledge from a massive dataset and can then be fine-tuned with a smaller, more specific dataset to solve a new problem. This saves a huge amount of time and resources.

For instance, an e-commerce company can use a foundational model to power a customer service chatbot. Instead of building the model from scratch, they can fine-tune an existing LLM with their product information and customer service history. This allows them to create a highly accurate and helpful bot much faster.
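Here is a minimal sketch of the simplest form of transfer learning: keep a “pre-trained” base frozen and train only a small task-specific head on a handful of examples. Everything in it is an assumption made for illustration. `pretrained_features` is a toy stand-in for a real foundational model, the customer messages are invented, and full fine-tuning of an LLM would also update the base model’s own weights.

```python
import math


def pretrained_features(text):
    """Toy stand-in for a frozen base model: text in, feature vector out."""
    words = text.lower().split()
    return [
        float(sum(w in {"refund", "return", "broken"} for w in words)),
        float(sum(w in {"shipping", "delivery", "track"} for w in words)),
        1.0,  # constant bias feature
    ]


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def predict(weights, text):
    """Probability that a message is a refund request (label 1)."""
    x = pretrained_features(text)
    return sigmoid(sum(w * xi for w, xi in zip(weights, x)))


def fine_tune_head(examples, epochs=200, lr=0.5):
    """Train only the small head with gradient descent; the base stays fixed."""
    weights = [0.0, 0.0, 0.0]
    for _ in range(epochs):
        for text, label in examples:
            x = pretrained_features(text)
            error = predict(weights, text) - label
            weights = [w - lr * error * xi for w, xi in zip(weights, x)]
    return weights


# Hypothetical labelled messages: 1 = refund request, 0 = shipping question.
TRAINING_EXAMPLES = [
    ("I want a refund", 1),
    ("my item arrived broken", 1),
    ("where is my delivery", 0),
    ("how do I track shipping", 0),
]

head = fine_tune_head(TRAINING_EXAMPLES)
print(predict(head, "please return my order") > 0.5)
print(predict(head, "track my delivery") > 0.5)
```

The head has only three numbers to learn because the base has already done the hard work of turning text into useful features. That is the saving the fine-tuning approach relies on.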

Foundational models can be adapted for many different tasks, which is far more efficient than building a new model from scratch.

- Large Language Models (LLMs): These are foundational models trained on text and code, enabling them to understand and generate human-like language. Products like OpenAI’s GPT, Google’s Gemini, and Anthropic’s Claude are all foundational models.
- Computer Vision Models: Models trained to understand and process images.
- Multimodal Models: Models that can handle multiple data types, such as text and images.

Infra Optimisation

With the enormous costs and energy consumption associated with AI, infrastructure optimisation has become a critical layer. This involves a set of practices and tools designed to make the entire system more efficient and cost-effective.

Techniques like quantisation, for example, reduce the size of a model by lowering the numerical precision of its weights and activations (e.g., from 32-bit floating point to 8-bit integers), so it can run faster and with less memory, usually with only a small drop in accuracy. Another key method is model pruning, where connections in a neural network that contribute little to its output are removed to create a smaller, more efficient model. Knowledge distillation involves training a smaller “student” model to mimic the behaviour of a larger, more complex “teacher” model. These methods are essential for deploying powerful AI on less powerful hardware, such as a smartphone or a smart home device.
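The three techniques above can be sketched in a few lines of plain Python. This is a simplified illustration under stated assumptions, not how any particular framework implements them: the function names are invented for this post, the quantisation is the basic symmetric 8-bit scheme, the pruning is simple magnitude pruning, and the distillation part shows only the loss a student would be trained to minimise.

```python
import math


def quantise_int8(weights):
    """Symmetric 8-bit quantisation: map floats to integers in [-127, 127]."""
    largest = max(abs(w) for w in weights)
    scale = largest / 127 if largest > 0 else 1.0
    return [round(w / scale) for w in weights], scale


def dequantise(quantised, scale):
    """Recover approximate float weights from the stored integers."""
    return [q * scale for q in quantised]


def prune_by_magnitude(weights, fraction):
    """Set the given fraction of smallest-magnitude weights to zero."""
    k = int(len(weights) * fraction)
    by_size = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    drop = set(by_size[:k])
    return [0.0 if i in drop else w for i, w in enumerate(weights)]


def softmax(logits, temperature=1.0):
    """Turn raw scores into probabilities; higher temperature softens them."""
    scaled = [value / temperature for value in logits]
    top = max(scaled)
    exps = [math.exp(s - top) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(student_logits, teacher_logits, temperature=2.0):
    """Cross-entropy between the teacher's softened outputs and the student's."""
    teacher = softmax(teacher_logits, temperature)
    student = softmax(student_logits, temperature)
    return -sum(t * math.log(s) for t, s in zip(teacher, student))


weights = [0.5, -1.27, 0.02, 0.9, -0.003, 0.3]
quantised, scale = quantise_int8(weights)
print(quantised, dequantise(quantised, scale))
print(prune_by_magnitude(weights, 0.5))
print(distillation_loss([0.0, 1.0, 2.0], [2.0, 1.0, 0.0]))
```

Each stored integer needs a quarter of the memory of a 32-bit float, at the cost of a rounding error of at most half the scale per weight. The distillation loss shrinks as the student’s outputs move closer to the teacher’s.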

Google has famously used machine learning itself to optimise its data centres: in 2016, DeepMind reported cutting the energy used for cooling by up to 40%. This kind of self-optimisation is key to making AI scalable and more sustainable.

Data Layer

Behind every intelligent AI is a well-organised and accessible data layer. This is the foundation upon which all models are built and trained. The data layer includes everything from databases and data warehouses to data lakes and real-time streaming services. It’s the circulatory system of the AI world, ensuring the right information gets to the right place at the right time.

For an AI system to be useful, it needs high-quality, clean, and relevant data. An AI-powered personalised recommendation system, for instance, relies on a data layer that stores vast amounts of user behaviour, purchase history, and product information. Data governance and data quality are paramount here; if the data is inaccurate or biased, the AI model will be too. This data is constantly being collected, processed, and fed to the models to improve their accuracy.

- Data Pipelines: These are automated processes for ingesting raw data from various sources.
- Data Storage: This includes solutions like data lakes (for raw, unstructured data) and data warehouses (for organised, structured data).
- Data Quality: Ensuring data is clean, relevant, and unbiased is paramount. Flaws in this layer can lead to flawed and unreliable AI models.

A good example is Spotify’s recommendation engine. It’s built on a data layer that tracks every song you listen to, skip, and add to a playlist. This rich, real-time data is then used to train the models that suggest new music you might like.
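As a rough sketch of what one step in such a data layer might look like, the Python below takes raw listening events, drops the ones that fail basic quality checks, and summarises the rest per user. The field names (`user_id`, `track_id`, `action`, `timestamp`) and the list of valid actions are assumptions made up for this example, not any real service’s schema.

```python
from collections import Counter, defaultdict

# Hypothetical set of actions the pipeline accepts.
VALID_ACTIONS = {"play", "skip", "add_to_playlist"}


def clean_events(raw_events):
    """Data quality step: drop incomplete, invalid, and duplicate events."""
    seen = set()
    cleaned = []
    for event in raw_events:
        user = event.get("user_id")
        track = event.get("track_id")
        action = event.get("action")
        timestamp = event.get("timestamp")
        if not user or not track or action not in VALID_ACTIONS:
            continue  # missing fields or an unknown action
        key = (user, track, action, timestamp)
        if key in seen:
            continue  # exact duplicate of an earlier event
        seen.add(key)
        cleaned.append(
            {"user_id": user, "track_id": track, "action": action, "timestamp": timestamp}
        )
    return cleaned


def summarise_by_user(events):
    """Aggregate step: count each action per user, ready for model training."""
    counts = defaultdict(Counter)
    for event in events:
        counts[event["user_id"]][event["action"]] += 1
    return {user: dict(actions) for user, actions in counts.items()}


raw = [
    {"user_id": "u1", "track_id": "t1", "action": "play", "timestamp": 1},
    {"user_id": "u1", "track_id": "t1", "action": "play", "timestamp": 1},
    {"user_id": "u1", "track_id": "t2", "action": "skip", "timestamp": 2},
    {"user_id": None, "track_id": "t3", "action": "play", "timestamp": 3},
    {"user_id": "u2", "track_id": "t1", "action": "dance", "timestamp": 4},
]
print(summarise_by_user(clean_events(raw)))
```

Of the five raw events, only two survive the checks. The duplicate, the event with no user, and the event with an unknown action never reach the model.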

Front-End Applications

Finally, the front-end applications are what we, the users, actually interact with. This is where all the hard work from the previous layers comes together in a seamless and intuitive user experience.

Think about a simple mobile app that can identify objects in a photo you take. The application itself is the front-end. When you take the photo, the application uses an API (Application Programming Interface) to send that image to a back-end server, where a pre-trained foundational model (like a computer vision model) processes it and sends back the result. The app then displays the name of the object on the screen.
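The round trip described above can be sketched as follows. To keep the example self-contained, the “network call” is just a direct function call and the model is a fake that always gives the same answer. The payload fields (`image_b64`, `label`, `confidence`) and all function names are hypothetical; a real app would send the request over HTTP to whatever API its back-end exposes.

```python
import base64
import json


def build_request(image_bytes):
    """Front-end: package the photo as a JSON payload for the API."""
    encoded = base64.b64encode(image_bytes).decode("ascii")
    return json.dumps({"image_b64": encoded})


def fake_vision_model(image_bytes):
    """Stand-in for a pre-trained vision model; returns a fixed answer."""
    return ("cat", 0.93) if image_bytes else ("unknown", 0.0)


def handle_request(request_body, model):
    """Back-end: decode the image, run the model, return a JSON response."""
    payload = json.loads(request_body)
    image_bytes = base64.b64decode(payload["image_b64"])
    label, confidence = model(image_bytes)
    return json.dumps({"label": label, "confidence": confidence})


def render(response_body):
    """Front-end: turn the API response into text to show on screen."""
    result = json.loads(response_body)
    return f"{result['label']} ({result['confidence']:.0%})"


photo = b"\x89PNG fake image bytes"
response = handle_request(build_request(photo), fake_vision_model)
print(render(response))
```

The front-end never touches the model directly. It only knows the shape of the request and the response, which is what allows the model behind the API to be swapped or upgraded without changing the app.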

Vercel’s v0 is an excellent example in this space. It’s a tool for front-end developers that uses AI to generate user interface (UI) code from a simple text description. It dramatically speeds up the process of creating a website or app, turning ideas into functional code with just a few words. Other front-end applications include AI-powered medical diagnosis tools that assist doctors in analysing scans or generative design software used by engineers to create new product prototypes.

This is the top of the stack, where users directly interact with the AI. The application is the user interface and the business logic that connects the user’s request to the underlying foundational model.

- Chatbots: An application like ChatGPT is the user-facing interface built on top of the GPT foundational model. It takes your prompt, sends it to the model for processing, and displays the response back to you.
- Integrated Assistants: Microsoft Copilot is an application integrated into products like Microsoft 365. It sends user requests to a foundational model (like GPT-4), but the user interacts with the AI within the context of their document or email, making it a seamless experience.
- Generative Tools: Applications that use foundational models to generate images, videos, or code based on a user’s prompt.

How Products like ChatGPT, Gemini, and Copilot Fit In

You may be wondering where popular tools like OpenAI’s ChatGPT, Google’s Gemini, Microsoft’s Copilot, and Anthropic’s Claude fit into this hierarchy. The answer is that they span two layers: Foundational Models and Front-End Applications.

- Foundational Models: At their core, these products run on large language models (LLMs): the GPT series behind ChatGPT, and the Gemini and Claude model families. The models are the “brains” of the operation, trained on vast amounts of text and code. They are the engine that understands, reasons about, and generates human-like language.
- Front-End Applications: The user-facing tools we interact with are the applications. The website you visit to use ChatGPT is an application built on top of the GPT foundational model. Similarly, the Gemini web interface and Copilot, which is integrated into Microsoft 365, are applications that give users access to the power of these models.

This is a crucial distinction. Companies can either build their own foundational models (like OpenAI and Google) or use an existing one to create a new application (like Microsoft with Copilot). This two-layer structure encourages innovation, as developers can focus on creating new and useful applications without having to bear the enormous cost of training a model from scratch. Microsoft’s partnership with OpenAI is a prime example of such a symbiotic relationship: Microsoft provides the cloud infrastructure and capital, and OpenAI provides the cutting-edge models that power Microsoft’s new applications.

As Andrew Ng, a co-founder of Google Brain, famously said, “AI is the new electricity.” Just as electricity powered a revolution in the last century, AI is set to power the next.
